Update 6th July 2023 – Our paper entitled “CORE-GPT: Combining Open Access research and large language models for credible, trustworthy question answering.” has been accepted to TPDL2023 and will be published in the LCNS series by Springer.

The public release of ChatGPT-3 in November last year captured the public’s imagination and turned this technology into front page news overnight. Only this week we saw the release of its much more powerful sibling, ChatGPT-4. In just a few short weeks there have already been some frankly startling demonstrations of the capabilities of these models, from writing poetry to code completion amongst many others.

Today we’re excited to unveil a project we’ve been working on here called CORE-GPT. The CORE-GPT application is a step change in academic question answering. Our key development is that the provided answer is not just drawn from the model itself, as is done with ChatGPT and others, but is based on, and backed by, CORE’s vast corpus of 34 million open access scientific articles.

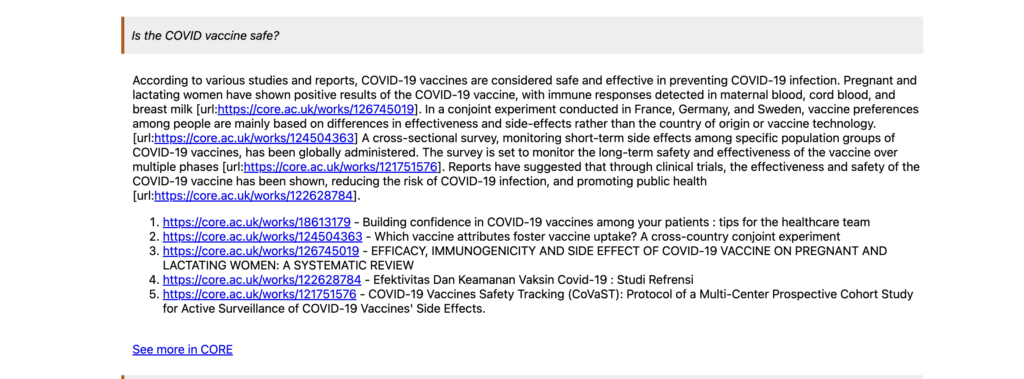

The answers provided by CORE-GPT are accompanied by citations to the scientific articles on which the answer is based. Links to the PDF version of these papers are also given so the reader or researcher can then quickly refer to these and also find other relevant articles.

Generative AI and Large Language Models are powerful, but far from perfect. They can provide extremely authoritative sounding answers, whilst actually just making stuff up completely. The modellers call these erroneous answers ‘hallucinations’ and they present a tricky problem. If the answers provided by these models might be spurious, this immediately makes their output far less credible or trustworthy.

CORE-GPT offers a solution to this problem. By ensuring that answers given are derived exclusively from scientific documents, this largely eliminates the risk of generating incorrect or misleading information. Further, by providing direct links to the scientific articles on which the answer is based, this greatly increases the trustworthiness of the given results.

We’re really excited by these latest developments and we are currently working on effectively scaling CORE-GPT so we can bring it to our 20 million monthly users very soon.

Dr. Petr Knoth, Head of CORE and Senior Research Fellow in Text and Data Mining at The Open University commented: “For over 10 years, we have been at CORE raising the awareness of the potential of Natural Language Processing over scientific corpora. The significance of CORE-GPT is its ability to produce answers backed by reputable open scientific literature, largely limiting the danger of generative language models producing spurious answers due to factually incorrect content or misinformation in the training corpora. CORE-GPT is opening a new research area into how we can best utilise language models to serve our society, by improving access to and the understanding of research outcomes for all.”

Professor John Domingue, who is leading on several projects using ChatGPT to enhance OU learning and teaching added, “I am very excited about the potential of CORE-GPT within the OU teaching context. We have just launched a new pilot using ChatGPT to semi-automate the writing of high quality course materials. I can easily envisage OU academics using CORE-GPT to update OU courses according to new results or students wishing to find out more on an advanced topic and then immediately receiving an explanation written in a language they can understand. I truly believe that this type of technology has the potential to radically transform Higher Education for the benefit of all of our students.”

————————

CORE is part of The Knowledge Media Institute (KMi), a diverse and multi-disciplinary research lab at The Open University.

CORE provides access to the world’s largest collection of almost 300 million open access scientific articles, including 34 million full text research papers, collating and indexing research from repositories and journals. It is a not-for-profit service operated by The Open University. CORE is dedicated to the open access mission and is one of the signatories of the Principles of Open Scholarly Infrastructures POSI.

Author: Dr. David Pride