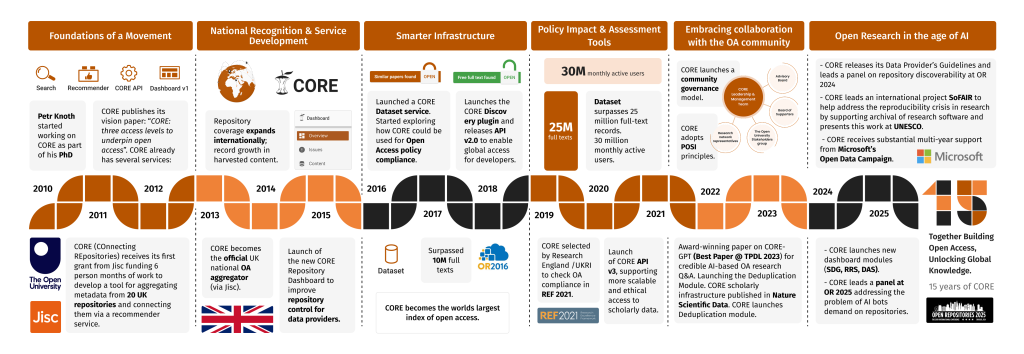

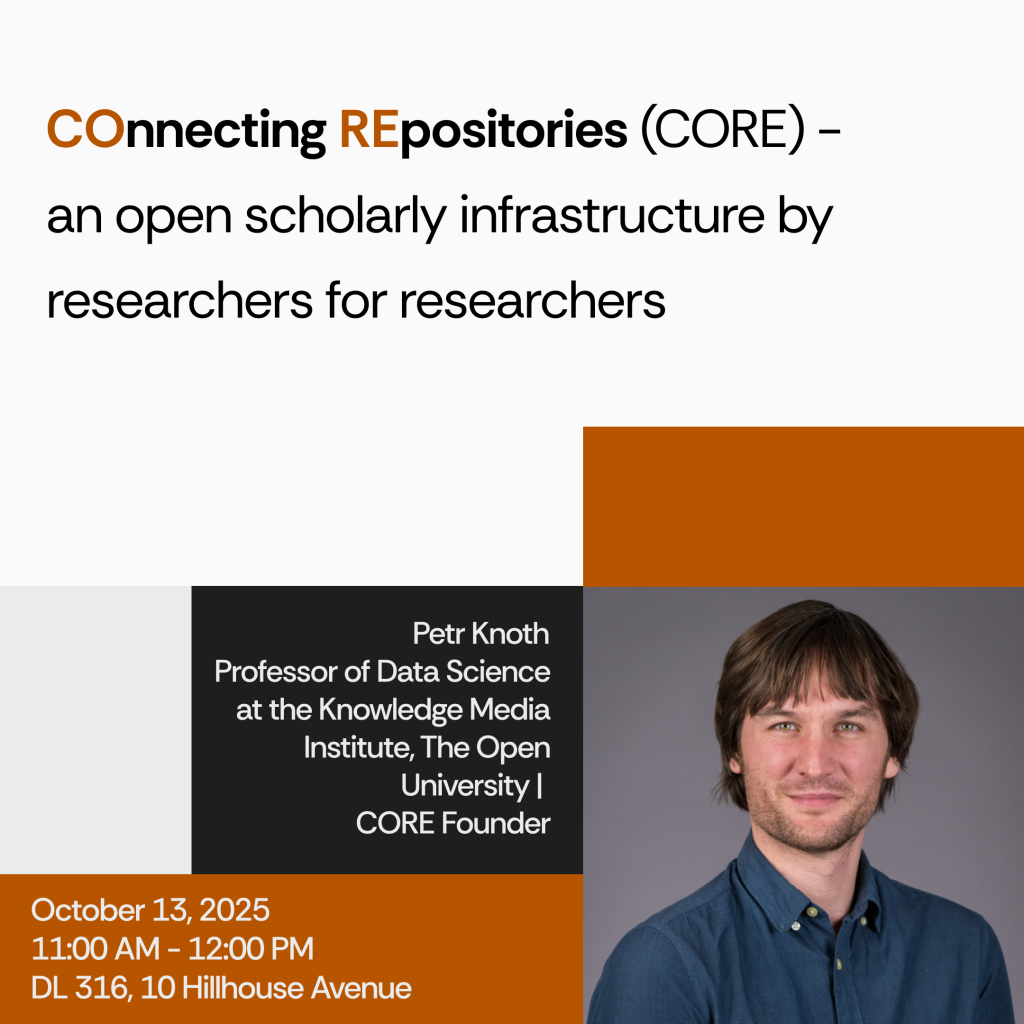

We’re pleased to share that Professor Petr Knoth, Founder and Head of CORE (core.ac.uk) and Professor of Data Science at The Open University’s Knowledge Media Institute, will be giving a Computer Science Talk at Yale University on 13 October 2025.

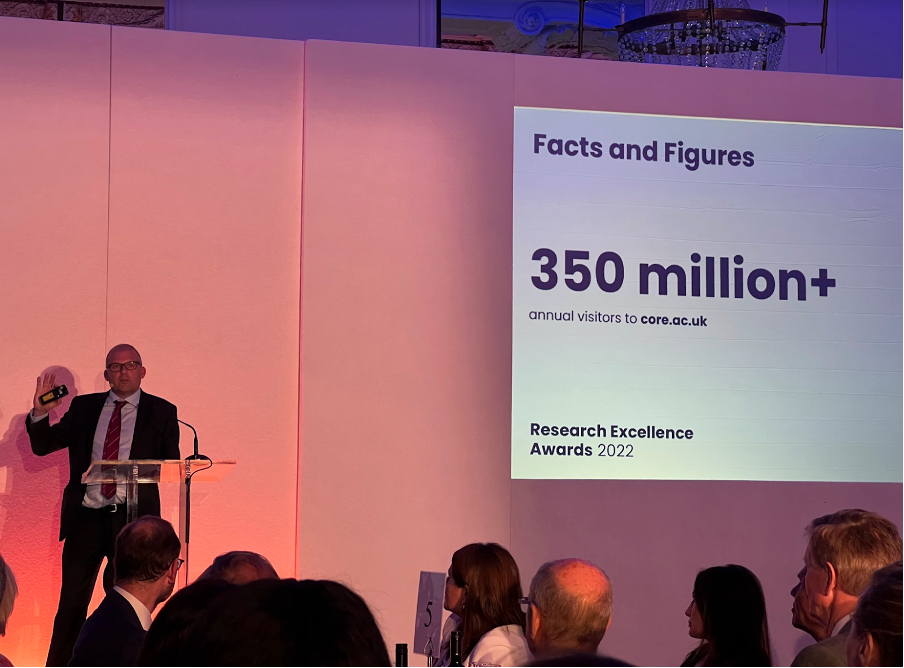

In his talk, titled “COnnecting REpositories (CORE) an open scholarly infrastructure by researchers for researchers,” Petr will introduce CORE’s role in advancing global open scholarship. He will highlight how CORE supports the discoverability, interoperability, and machine access of research outputs, and will showcase innovations from the Big Scientific Data and Text Analytics Group (BSDTAG), including CORE-GPT, SDG: Classify, and SoFAIR.